LU decomposition

From CFD-Wiki

m (→Algorithm) |

(small typo fixed: first we solve intermediary vectory y and then x based on this y) |

||

| (7 intermediate revisions not shown) | |||

| Line 16: | Line 16: | ||

we will be able to find the solution to the original system by solving | we will be able to find the solution to the original system by solving | ||

| - | :<math>U\vec{x} = \vec{ | + | :<math>U\vec{x} = \vec{y}</math> |

The first solution is a foward substitution, while the second solution is a backward substitution. Both can be done efficiently once the factorization is available. The forward substitution may be expressed as | The first solution is a foward substitution, while the second solution is a backward substitution. Both can be done efficiently once the factorization is available. The forward substitution may be expressed as | ||

| Line 29: | Line 29: | ||

x_i = {1 \over {u_{ii} }}\left( {y_i - \sum\limits_{j = i + 1}^n {u_{ij} x_j } } \right), | x_i = {1 \over {u_{ii} }}\left( {y_i - \sum\limits_{j = i + 1}^n {u_{ij} x_j } } \right), | ||

i = n,n-1,\ldots,1.</math> | i = n,n-1,\ldots,1.</math> | ||

| + | |||

| + | |||

| + | LU decomposition essentially stores the operations of Gaussian elimination in "higher-level" form (see [[#References|Golub and Van Loan]]), so repeated solutions using the same left-hand side are computed without repetition of operations that are independent of the right-hand side. | ||

== Algorithm == | == Algorithm == | ||

| Line 51: | Line 54: | ||

If one has a single lefthand-side matrix and many right-hand side vectors, then LU decomposition would be a good solution procedure to consider. At the very least, it should be faster than solving each system separately with Gaussian elimination. | If one has a single lefthand-side matrix and many right-hand side vectors, then LU decomposition would be a good solution procedure to consider. At the very least, it should be faster than solving each system separately with Gaussian elimination. | ||

| + | |||

| + | Here a Fortran code fragment for LU decomposition written by D. Partenov | ||

| + | |||

| + | do j = 1, n | ||

| + | do i = 1, j | ||

| + | if (i == 1) then | ||

| + | a(i,j) = a(i, j) | ||

| + | else | ||

| + | suma = 0.0 | ||

| + | do k = 1, i-1 | ||

| + | suma = suma + a(i,k)*a(k,j) | ||

| + | end do | ||

| + | a(i,j) = a(i,j) - suma | ||

| + | end if | ||

| + | end do | ||

| + | if ( j < n) then | ||

| + | do s = 1, n-j | ||

| + | i = j + s | ||

| + | if (j == 1) then | ||

| + | a(i,j) = a(i,j)/a(j,j) | ||

| + | else | ||

| + | suma = 0.0 | ||

| + | do k = 1, j-1 | ||

| + | suma = suma + a(i,k)*a(k,j) | ||

| + | end do | ||

| + | a(i,j) = (a(i,j) - suma)/a(j,j) | ||

| + | end if | ||

| + | end do | ||

| + | end if | ||

| + | end do | ||

| + | y(1) = b(1) | ||

| + | do i = 2, n | ||

| + | suma = 0.0 | ||

| + | do j = 1, i-1 | ||

| + | suma = suma + a(i,j)*y(j) | ||

| + | end do | ||

| + | y(i) = b(i) - suma | ||

| + | end do | ||

| + | i = n | ||

| + | j = n | ||

| + | x(i) = y(i)/a(i,j) | ||

| + | do s = 1, n -1 | ||

| + | i = n - s | ||

| + | suma = 0.0 | ||

| + | do j = i+1, n | ||

| + | suma = suma + a(i,j)*x(j) | ||

| + | end do | ||

| + | x(i) = (y(i) - suma)/a(i,i) | ||

| + | end do | ||

| + | |||

| + | == References == | ||

| + | *{{reference-book|author=Golub and Van Loan|year=1996|title=Matrix Computations|rest= 3rd edition, The Johns Hopkins University Press, Baltimore}} | ||

| + | *[http://en.wikipedia.org/wiki/LU_decomposition Wikipedia article ''LU decomposition] | ||

Latest revision as of 09:22, 21 November 2011

Contents |

Description

Consider the system of equations  , where

, where  is an

is an  nonsingular matrix.

nonsingular matrix.  may be decomposed into an lower triangular part

may be decomposed into an lower triangular part  and an upper triangular part

and an upper triangular part  that will lead us to a direct procedure for the solution of the original system. This decomposition procedure is quite useful when more than one right-hand side (more than one

that will lead us to a direct procedure for the solution of the original system. This decomposition procedure is quite useful when more than one right-hand side (more than one  ) is to be used.

) is to be used.

The algorithm is relatively straightforward - first, we determine the upper and lower triangular parts:

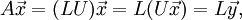

Then,

where  . Once we solve the system

. Once we solve the system

we will be able to find the solution to the original system by solving

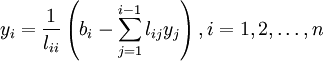

The first solution is a foward substitution, while the second solution is a backward substitution. Both can be done efficiently once the factorization is available. The forward substitution may be expressed as

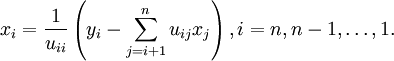

and the backward substitution process may be expressed as

LU decomposition essentially stores the operations of Gaussian elimination in "higher-level" form (see Golub and Van Loan), so repeated solutions using the same left-hand side are computed without repetition of operations that are independent of the right-hand side.

Algorithm

Add factorization here

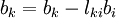

Forward substitution

- for k:=1 step until n do

- for i:=1 step until k-1

-

- end loop (i)

-

- for i:=1 step until k-1

- end loop (k)

Backward substitution

- for k:=n stepdown until 1 do

- for i:=k+1 step until n

-

- end loop (i)

-

- for i:=k+1 step until n

- end loop (k)

Important Considerations

As with Gaussian elimination, LU decomposition is probably best used for relatively small, relatively non-sparse systems of equations (with small and non-sparse open to some interpretation). For larger and/or sparse problems, it would probably be best to either use an iterative method or use a direct solver package (e.g. DSCPACK) as opposed to writing one of your own.

If one has a single lefthand-side matrix and many right-hand side vectors, then LU decomposition would be a good solution procedure to consider. At the very least, it should be faster than solving each system separately with Gaussian elimination.

Here a Fortran code fragment for LU decomposition written by D. Partenov

do j = 1, n

do i = 1, j

if (i == 1) then

a(i,j) = a(i, j)

else

suma = 0.0

do k = 1, i-1

suma = suma + a(i,k)*a(k,j)

end do

a(i,j) = a(i,j) - suma

end if

end do

if ( j < n) then

do s = 1, n-j

i = j + s

if (j == 1) then

a(i,j) = a(i,j)/a(j,j)

else

suma = 0.0

do k = 1, j-1

suma = suma + a(i,k)*a(k,j)

end do

a(i,j) = (a(i,j) - suma)/a(j,j)

end if

end do

end if

end do

y(1) = b(1)

do i = 2, n

suma = 0.0

do j = 1, i-1

suma = suma + a(i,j)*y(j)

end do

y(i) = b(i) - suma

end do

i = n

j = n

x(i) = y(i)/a(i,j)

do s = 1, n -1

i = n - s

suma = 0.0

do j = i+1, n

suma = suma + a(i,j)*x(j)

end do

x(i) = (y(i) - suma)/a(i,i)

end do

References

- Golub and Van Loan (1996), Matrix Computations, 3rd edition, The Johns Hopkins University Press, Baltimore.

- Wikipedia article LU decomposition