Probability density function

From CFD-Wiki

| Line 26: | Line 26: | ||

\int P(\Phi) d \Phi = 1 | \int P(\Phi) d \Phi = 1 | ||

</math> | </math> | ||

| - | Integrating over all the possible values of <math> \phi </math>. | + | Integrating over all the possible values of <math> \phi </math>, |

| + | <math> \Phi </math> is the sample space of the scalar variable <math> \phi </math>. | ||

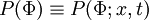

The PDF of any stochastic variable depends "a-priori" on space and time. | The PDF of any stochastic variable depends "a-priori" on space and time. | ||

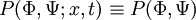

:<math> P(\Phi;x,t) </math> | :<math> P(\Phi;x,t) </math> | ||

| + | for clarity of notation, the space and time dependence is dropped. | ||

| + | <math> P(\Phi) \equiv P(\Phi;x,t) </math> | ||

| + | |||

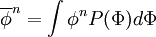

From the PDF of a variable, one can define its <math> n </math>th moment as | From the PDF of a variable, one can define its <math> n </math>th moment as | ||

| Line 55: | Line 59: | ||

For two variables (or more) a joint-PDF of <math> \phi </math> and <math> \psi </math> is defined | For two variables (or more) a joint-PDF of <math> \phi </math> and <math> \psi </math> is defined | ||

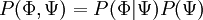

| - | :<math> P(\Phi,\Psi;x,t) </math> | + | :<math> P(\Phi,\Psi;x,t) \equiv P (\Phi,\Psi) </math> |

| - | and the marginal PDF's are | + | where <math> \Phi \mbox{ and } \Psi </math> form the phase-space for |

| + | <math> \phi \mbox{ and } \psi </math>. | ||

| + | The marginal PDF's are obtained by integration over the sample space of one variable. | ||

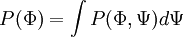

:<math> | :<math> | ||

P(\Phi) = \int P(\Phi,\Psi) d\Psi | P(\Phi) = \int P(\Phi,\Psi) d\Psi | ||

| Line 75: | Line 81: | ||

</math> | </math> | ||

where <math> P(\Phi|\Psi) </math> is the conditional PDF. | where <math> P(\Phi|\Psi) </math> is the conditional PDF. | ||

| + | |||

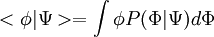

| + | The conditional average of a scalar can be expressed as a function of the | ||

| + | conditional PDF | ||

| + | :<math> | ||

| + | <\phi | \Psi > = \int \phi P(\Phi|\Psi) d \Phi | ||

| + | </math> | ||

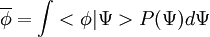

| + | and the mean value of a scalar can be expressed | ||

| + | |||

| + | :<math> | ||

| + | \overline{\phi} = \int <\phi | \Psi > P(\Psi) d \Psi | ||

| + | </math> | ||

| + | only if <math> \phi </math> and <math> \psi </math> are correlated. | ||

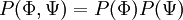

If two variables are uncorrelated then they are statistically independent and their joint PDF can be expressed as a product of their marginal PDFs. | If two variables are uncorrelated then they are statistically independent and their joint PDF can be expressed as a product of their marginal PDFs. | ||

Revision as of 13:37, 19 October 2005

Stochastic methods use distribution functions to describe the fluctuating scalars in a turbulent field.

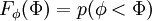

The distribution function  of a scalar

of a scalar  is the probability

is the probability

of finding a value of

of finding a value of

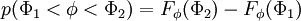

The probability of finding  in a range

in a range  is

is

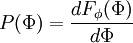

The probability density function (PDF) is

where  is the probability of

is the probability of  being in the range

being in the range  . It follows that

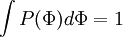

. It follows that

Integrating over all the possible values of  ,

,

is the sample space of the scalar variable

is the sample space of the scalar variable  .
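As a rough illustration of the definitions above, the following sketch estimates a PDF from samples with a histogram and checks the normalisation and the probability of finding the scalar in a range. The Gaussian samples, the bin range and the helper name `estimate_pdf` are assumptions made for this example and are not part of the article.

```python
import random

def estimate_pdf(samples, lo, hi, nbins):
    """Histogram estimate of P(Phi) on [lo, hi).

    Returns (bin centres, density per bin, bin width).
    """
    width = (hi - lo) / nbins
    counts = [0] * nbins
    for s in samples:
        if lo <= s < hi:
            # Guard against a floating-point index equal to nbins.
            idx = min(int((s - lo) / width), nbins - 1)
            counts[idx] += 1
    total = sum(counts)
    centres = [lo + (i + 0.5) * width for i in range(nbins)]
    density = [c / (total * width) for c in counts]
    return centres, density, width

random.seed(0)
# Hypothetical scalar: standard Gaussian fluctuations.
samples = [random.gauss(0.0, 1.0) for _ in range(20000)]
centres, density, width = estimate_pdf(samples, -5.0, 5.0, 50)

# Normalisation: the integral of P(Phi) over the sample space is 1.
norm = sum(p * width for p in density)

# Probability of finding phi in the range (Phi_1, Phi_2) = (-1, 1).
prob = sum(p * width for c, p in zip(centres, density) if -1.0 < c < 1.0)

print(norm, prob)
```

For Gaussian samples the second number should lie close to 0.68, up to sampling noise.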

The PDF of any stochastic variable depends "a-priori" on space and time.

:<math> P(\Phi;x,t) </math>

For clarity of notation, the space and time dependence is dropped.

:<math> P(\Phi) \equiv P(\Phi;x,t) </math>

From the PDF of a variable, one can define its  th moment as

th moment as

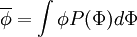

the  case is called the "mean".

case is called the "mean".

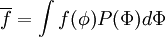

Similarly the mean of a function can be obtained as

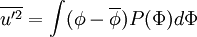

Where the second central moment is called the "variance"
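The moment integrals can be evaluated numerically once a PDF is known. The sketch below assumes a Gaussian PDF with mean 2 and standard deviation 0.5 and uses a simple midpoint rule. The function names and the integration limits are choices made for this example only.

```python
import math

def gaussian_pdf(Phi, mean, std):
    """Gaussian PDF evaluated at the sample-space value Phi."""
    return math.exp(-0.5 * ((Phi - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))

def integrate(f, lo, hi, n=20000):
    """Midpoint-rule approximation of the integral of f over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(f(lo + (i + 0.5) * h) for i in range(n))

def moment(pdf, n, lo, hi):
    """n-th moment: integral of Phi**n * P(Phi) over the sample space."""
    return integrate(lambda Phi: Phi ** n * pdf(Phi), lo, hi)

def pdf(Phi):
    # Assumed PDF for illustration: mean 2.0, standard deviation 0.5.
    return gaussian_pdf(Phi, 2.0, 0.5)

lo, hi = -3.0, 7.0  # ten standard deviations on each side of the mean

mean = moment(pdf, 1, lo, hi)
variance = integrate(lambda Phi: (Phi - mean) ** 2 * pdf(Phi), lo, hi)
mean_of_f = integrate(lambda Phi: Phi ** 2 * pdf(Phi), lo, hi)  # f(phi) = phi^2

print(mean, variance, mean_of_f)
```

With these assumed parameters the mean is 2, the variance is 0.25, and the mean of the function equals the variance plus the squared mean.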

For two variables (or more) a joint-PDF of  and

and  is defined

is defined

where  form the phase-space for

form the phase-space for

.

The marginal PDF's are obtained by integration over the sample space of one variable.

.

The marginal PDF's are obtained by integration over the sample space of one variable.

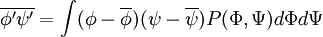

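For two variables the correlation is given by

:<math>
\overline{(\phi - \overline{\phi})(\psi - \overline{\psi})} = \int \int (\Phi - \overline{\phi})(\Psi - \overline{\psi}) P(\Phi,\Psi) d\Phi d\Psi
</math>

This term often appears in the averaged Navier-Stokes equations for turbulent flows (with <math> \phi </math> and <math> \psi </math> being velocity components) and is unclosed.

Using Bayes' theorem a joint-PDF can be expressed as

:<math>
P(\Phi,\Psi) = P(\Phi|\Psi) P(\Psi)
</math>

where <math> P(\Phi|\Psi) </math> is the conditional PDF.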
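A small discrete example may help to make the joint, marginal and conditional PDFs concrete. The table below is a hypothetical joint PDF on a 3 x 2 grid of sample-space values with unit bin width, so the integrals reduce to sums. All numbers and variable names are assumptions for illustration.

```python
# Hypothetical sample-space values and joint PDF: joint[i][j] = P(Phi_i, Psi_j).
Phi_vals = [0.0, 1.0, 2.0]
Psi_vals = [0.0, 1.0]
joint = [
    [0.20, 0.10],
    [0.20, 0.20],
    [0.10, 0.20],
]
n_phi, n_psi = len(Phi_vals), len(Psi_vals)

# Marginal PDFs: integrate (here, sum) over the other variable.
P_Phi = [sum(joint[i][j] for j in range(n_psi)) for i in range(n_phi)]
P_Psi = [sum(joint[i][j] for i in range(n_phi)) for j in range(n_psi)]

# Conditional PDF from Bayes' theorem: P(Phi|Psi) = P(Phi,Psi) / P(Psi).
cond = [[joint[i][j] / P_Psi[j] for j in range(n_psi)] for i in range(n_phi)]

# Means from the marginals.
mean_phi = sum(v * p for v, p in zip(Phi_vals, P_Phi))
mean_psi = sum(v * p for v, p in zip(Psi_vals, P_Psi))

# Correlation: double integral of the product of fluctuations over the joint PDF.
correlation = sum(
    (Phi_vals[i] - mean_phi) * (Psi_vals[j] - mean_psi) * joint[i][j]
    for i in range(n_phi)
    for j in range(n_psi)
)

print(P_Phi, P_Psi, correlation)
```

Each column of `cond` sums to one, as a conditional PDF must for every fixed value of the conditioning variable.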

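The conditional average of a scalar can be expressed as a function of the conditional PDF

:<math>
<\phi | \Psi > = \int \Phi P(\Phi|\Psi) d \Phi
</math>

and the mean value of a scalar can be expressed

:<math>
\overline{\phi} = \int <\phi | \Psi > P(\Psi) d \Psi
</math>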

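This relation holds in general, but it is informative only if <math> \phi </math> and <math> \psi </math> are statistically dependent: for independent variables the conditional average reduces to the unconditional mean.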
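The relation between the conditional average and the mean can be checked with a simple Monte Carlo sketch. Here the conditioning variable is assumed uniform on [0, 1) and the scalar is assumed to follow it linearly with added Gaussian noise. The model, the sample size and the number of conditioning bins are all arbitrary choices for this example.

```python
import random

random.seed(1)
n = 50000
nbins = 10

# Hypothetical model: psi uniform on [0, 1), phi = 2 * psi + noise.
psi = [random.random() for _ in range(n)]
phi = [2.0 * s + random.gauss(0.0, 0.1) for s in psi]

# Accumulate phi in bins of the conditioning variable psi.
sums = [0.0] * nbins
counts = [0] * nbins
for p, s in zip(phi, psi):
    j = min(int(s * nbins), nbins - 1)
    sums[j] += p
    counts[j] += 1

# Conditional average <phi | Psi_j> and probability of each Psi bin.
cond_avg = [sums[j] / counts[j] for j in range(nbins)]
P_Psi = [counts[j] / n for j in range(nbins)]

# Mean recovered by weighting the conditional averages with P(Psi).
mean_from_cond = sum(a * w for a, w in zip(cond_avg, P_Psi))
mean_direct = sum(phi) / n

print(mean_from_cond, mean_direct)
```

The two means agree to rounding error, while `cond_avg` grows roughly linearly across the bins because the scalar depends on the conditioning variable in this assumed model.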

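If two variables are statistically independent then they are uncorrelated and their joint PDF can be expressed as a product of their marginal PDFs, <math> P(\Phi,\Psi) = P(\Phi) P(\Psi) </math>. The converse does not hold in general: uncorrelated variables are not necessarily independent.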
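As a minimal check of the product form, the sketch below builds a joint PDF as the product of two assumed discrete marginals and verifies that the correlation vanishes and that the conditional PDF equals the marginal. The marginal values are hypothetical.

```python
# Hypothetical discrete marginals with unit bin width.
Phi_vals = [0.0, 1.0, 2.0]
P_Phi = [0.3, 0.4, 0.3]
Psi_vals = [0.0, 1.0]
P_Psi = [0.25, 0.75]

# Joint PDF of independent variables: product of the marginals.
joint = [[p * q for q in P_Psi] for p in P_Phi]

mean_phi = sum(v * p for v, p in zip(Phi_vals, P_Phi))
mean_psi = sum(v * q for v, q in zip(Psi_vals, P_Psi))

# Correlation of the fluctuations under the product-form joint PDF.
correlation = sum(
    (Phi_vals[i] - mean_phi) * (Psi_vals[j] - mean_psi) * joint[i][j]
    for i in range(len(Phi_vals))
    for j in range(len(Psi_vals))
)

# Conditional PDF P(Phi|Psi_j) for each j; it should not depend on j.
cond = [[joint[i][j] / P_Psi[j] for j in range(len(Psi_vals))]
        for i in range(len(Phi_vals))]

print(correlation, cond)
```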