Successive over-relaxation method - SOR

From CFD-Wiki

Revision as of 20:33, 15 December 2005

We seek the solution to a set of linear equations:

:<math> A \cdot X = Q </math>

for the given matrix A and right-hand-side vector Q, where X is the vector of unknowns.

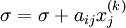

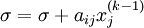

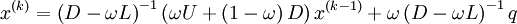

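In matrix terms, the SOR method can be expressed as:

:<math> X^{(k)} = (D - \omega L)^{-1} \left( \omega U + (1 - \omega) D \right) X^{(k-1)} + \omega (D - \omega L)^{-1} Q </math>

where D, -L and -U are the diagonal, strictly lower triangular and strictly upper triangular parts of the coefficient matrix A (so that A = D - L - U), and k is the iteration counter.

<math>\omega</math> is the extrapolation (relaxation) factor; <math>\omega = 1</math> recovers the Gauss-Seidel method.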
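As a rough illustration of the matrix form, the sketch below carries out one iteration as (D - ωL) X_new = (ωU + (1 - ω) D) X_old + ωQ, assuming the splitting A = D - L - U. Since D - ωL is lower triangular, forward substitution is used in place of an explicit inverse. The function names `split_dlu` and `sor_matrix_step`, the 2x2 test system and the value ω = 1.1 are illustrative assumptions, not part of the original article.

```python
def split_dlu(a):
    """Split a square matrix (list of rows) so that A = D - L - U."""
    n = len(a)
    d = [[a[i][j] if i == j else 0.0 for j in range(n)] for i in range(n)]
    l = [[-a[i][j] if j < i else 0.0 for j in range(n)] for i in range(n)]
    u = [[-a[i][j] if j > i else 0.0 for j in range(n)] for i in range(n)]
    return d, l, u


def sor_matrix_step(a, q, x_old, omega):
    """One SOR step: solve (D - omega L) x_new = (omega U + (1 - omega) D) x_old + omega Q."""
    n = len(a)
    d, l, u = split_dlu(a)
    # Right-hand side: (omega U + (1 - omega) D) x_old + omega Q
    rhs = [
        sum((omega * u[i][j] + (1.0 - omega) * d[i][j]) * x_old[j] for j in range(n))
        + omega * q[i]
        for i in range(n)
    ]
    # D - omega L is lower triangular with diagonal D, so solve by forward substitution.
    x_new = [0.0] * n
    for i in range(n):
        s = sum((d[i][j] - omega * l[i][j]) * x_new[j] for j in range(i))
        x_new[i] = (rhs[i] - s) / d[i][i]
    return x_new


# Illustrative system (assumed for this sketch); its exact solution is (1/11, 7/11).
a = [[4.0, 1.0], [1.0, 3.0]]
q = [1.0, 2.0]
x = [0.0, 0.0]
for _ in range(50):
    x = sor_matrix_step(a, q, x, omega=1.1)
```

Forming D, L and U explicitly is only convenient for small dense examples; in practice the component-wise loop is normally preferred because it never stores the split matrices.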

The pseudocode for the SOR algorithm:

Algorithm

- Choose an initial guess <math>X^{(0)}</math> to the solution

- for k := 1 step 1 until convergence do

- for i := 1 step 1 until n do

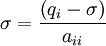

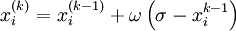

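- <math> \sigma = 0 </math>

- for j := 1 step 1 until i-1 do

- <math> \sigma = \sigma + a_{ij} x_j^{(k)} </math>

- end (j-loop)

- for j := i+1 step 1 until n do

- <math> \sigma = \sigma + a_{ij} x_j^{(k-1)} </math>

- end (j-loop)

- <math> \sigma = (q_i - \sigma) / a_{ii} </math>

- <math> x_i^{(k)} = x_i^{(k-1)} + \omega \left( \sigma - x_i^{(k-1)} \right) </math>

- end (i-loop)

- check if convergence is reached

- end (k-loop)

Here <math>a_{ij}</math>, <math>x_i</math> and <math>q_i</math> denote the entries of A, X and Q.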
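The loop structure above could be written in Python roughly as follows. This is a minimal sketch rather than a reference implementation: the name `sor_solve`, the zero initial guess, the default ω = 1.25, and the convergence test (largest change in any component below `tol`) are all assumptions made for illustration, since the algorithm itself leaves the convergence criterion open.

```python
def sor_solve(a, q, omega=1.25, tol=1e-10, max_iter=10_000):
    """Solve A X = Q by SOR; returns the solution and the iteration count."""
    n = len(q)
    x = [0.0] * n  # initial guess X^(0) = 0 (assumed)
    for k in range(1, max_iter + 1):
        max_change = 0.0
        for i in range(n):
            sigma = 0.0
            for j in range(i):
                sigma += a[i][j] * x[j]  # x[j] already updated in this sweep
            for j in range(i + 1, n):
                sigma += a[i][j] * x[j]  # x[j] still from the previous sweep
            sigma = (q[i] - sigma) / a[i][i]
            x_new = x[i] + omega * (sigma - x[i])
            max_change = max(max_change, abs(x_new - x[i]))
            x[i] = x_new  # overwrite in place; old value is no longer needed
        # Assumed convergence check: stop when no component moved more than tol.
        if max_change < tol:
            return x, k
    raise RuntimeError("SOR did not converge within max_iter iterations")


# Illustrative diagonally dominant system (assumed); exact solution is (1, 2, 3).
a = [[4.0, -1.0, 0.0], [-1.0, 4.0, -1.0], [0.0, -1.0, 4.0]]
q = [2.0, 4.0, 10.0]
solution, iterations = sor_solve(a, q)
```

Updating `x` in place means a single vector holds both the new values (indices below i) and the old values (indices above i), which is why the two j-loops can read from the same array.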
