Introduction to turbulence/Statistical analysis/Ensemble average

From CFD-Wiki


Revision as of 19:05, 19 February 2007


The ensemble and Ensemble Average

The mean or ensemble average

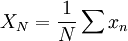

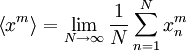

The concept of an ensemble average is based upon the existence of independent statistical events. For example, consider a number of individuals who are simultaneously flipping unbiased coins. If a value of one is assigned to a head and a value of zero to a tail, then the arithmetic average of the numbers generated is defined as

<math> X_{N} = \frac{1}{N} \sum^{N}_{n=1} x_{n} </math> (2.1)

where our <math> n </math>th flip is denoted as <math> x_{n} </math> and <math> N </math> is the total number of flips.

Now if all the coins are the same, it doesn't really matter whether we flip one coin <math> N </math> times, or <math> N </math> coins a single time. The key is that they must all be independent events, meaning the probability of achieving a head or tail in a given flip must be completely independent of what happens in all the other flips. Obviously we can't just flip one coin once and count it <math> N </math> times; these clearly would not be independent events.

Unless you had a very unusual experimental result, you probably noticed that the value of the <math> X_{N} </math>'s was also a random variable and differed from ensemble to ensemble. Also, the greater the number of flips in the ensemble, the closer you got to <math> X_{N} = 1/2 </math>. Obviously the bigger <math> N </math>, the less fluctuation there is in <math> X_{N} </math>.
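The coin-flipping experiment described above can be tried numerically. The following is only a minimal sketch: the function names, the seed, and the choice of 20 ensembles per value of N are assumptions made for illustration, and Python's pseudo-random generator stands in for real coins.

```python
import random

def arithmetic_mean_of_flips(n_flips, rng):
    """Return X_N for one ensemble of n_flips independent fair-coin flips (head = 1, tail = 0)."""
    return sum(rng.randint(0, 1) for _ in range(n_flips)) / n_flips

def spread_of_means(n_flips, n_ensembles, rng):
    """Return the (smallest, largest) X_N found over n_ensembles independent ensembles."""
    means = [arithmetic_mean_of_flips(n_flips, rng) for _ in range(n_ensembles)]
    return min(means), max(means)

rng = random.Random(0)  # fixed, arbitrary seed so that the run is repeatable
for n_flips in (10, 100, 10000):
    lo, hi = spread_of_means(n_flips, n_ensembles=20, rng=rng)
    print(f"N = {n_flips:6d}: X_N ranged from {lo:.3f} to {hi:.3f}")
```

The printed range should narrow around 1/2 as N grows, which is the behaviour described in the text; the exact numbers depend on the seed.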

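Now imagine that we are trying to establish the nature of a random variable <math> x </math>. The <math> n </math>th realization of <math> x </math> is denoted as <math> x_{n} </math>. The ensemble average of <math> x </math> is denoted as <math> X </math> (or <math> \left\langle x \right\rangle </math>), and is defined as

<math> X = \left\langle x \right\rangle \equiv \lim_{N \rightarrow \infty} \frac{1}{N} \sum^{N}_{n=1} x_{n} </math> (2.2)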

Obviously it is impossible to obtain the ensemble average experimentally, since we can never achieve an infinite number of independent realizations. The most we can ever obtain is the arithmetic mean for the number of realizations we have. For this reason the arithmetic mean can also be referred to as the estimator for the true mean or ensemble average.

Even though the true mean (or ensemble average) is unobtainable, the idea is still very useful. Most importantly, we can almost always be sure the ensemble average exists, even if we can only estimate what it really is. The fact of its existence, however, does not always mean that it is easy to obtain in practice. All the theoretical deductions in this course will use this ensemble average. Obviously this will mean we have to account for these "statistical differences" between true means and estimates when comparing our theoretical results to actual measurements or computations.

In general, the <math> x_{n} </math> could be realizations of any random variable. The <math> X </math> defined by equation 2.2 represents the ensemble average of it. The quantity <math> X </math> is sometimes referred to as the expected value of the random variable <math> x </math>, or even simply its mean.

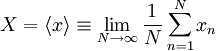

For example, the velocity vector at a given point in space and time, <math> \vec{x}, t </math>, in a given turbulent flow can be considered to be a random variable, say <math> u_{i} \left( \vec{x}, t \right) </math>. If there were a large number of identical experiments so that the <math> u^{(n)}_{i} \left( \vec{x}, t \right) </math> in each of them were identically distributed, then the ensemble average of <math> u^{(n)}_{i} \left( \vec{x}, t \right) </math> would be given by

<math> \left\langle u_{i} \left( \vec{x}, t \right) \right\rangle = U_{i} \left( \vec{x}, t \right) \equiv \lim_{N \rightarrow \infty} \frac{1}{N} \sum^{N}_{n=1} u^{(n)}_{i} \left( \vec{x}, t \right) </math> (2.3)

Note that this ensemble average, <math> U_{i} \left( \vec{x}, t \right) </math>, will, in general, vary with the independent variables <math> \vec{x} </math> and <math> t </math>. It will be seen later that under certain conditions the ensemble average is the same as the average which would be generated by averaging in time. Even when a time average is not meaningful, however, the ensemble average can still be defined; e.g., as in non-stationary or periodic flow. Only ensemble averages will be used in the development of the turbulence equations here unless otherwise stated.
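To illustrate an ensemble average that still depends on time, the sketch below averages many independent realizations of a non-stationary signal at each instant. The model signal (a deterministic trend sin(t) plus Gaussian noise), the function names, and all numerical values are assumptions chosen for illustration only; they do not come from any particular flow.

```python
import math
import random

def realization(times, rng, noise_amplitude=0.5):
    """One hypothetical realization: the trend sin(t) plus independent Gaussian noise."""
    return [math.sin(t) + rng.gauss(0.0, noise_amplitude) for t in times]

def ensemble_average(realizations):
    """Average across realizations separately at each time; the result is still a function of time."""
    n = len(realizations)
    return [sum(values) / n for values in zip(*realizations)]

rng = random.Random(0)  # fixed, arbitrary seed so that the run is repeatable
times = [0.5 * k for k in range(8)]
ensemble = [realization(times, rng) for _ in range(2000)]
U = ensemble_average(ensemble)
for t, u_mean in zip(times, U):
    print(f"t = {t:3.1f}  ensemble average = {u_mean:+.3f}  sin(t) = {math.sin(t):+.3f}")
```

With a finite number of realizations the result is only an estimate of the ensemble average, but it should follow the trend at every instant, whereas a single average over time would collapse the trend to one number.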

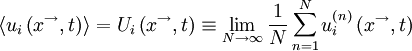

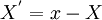

Fluctuations about the mean

It is often important to know how a random variable is distributed about the mean. For example, figure 2.1 illustrates portions of two random functions of time which have identical means, but are obviously members of different ensembles since the amplitudes of their fluctuations are not distributed in the same way. It is possible to distinguish between them by examining the statistical properties of the fluctuations about the mean (or simply the fluctuations) defined by:

<math> x^{'} = x - X </math> (2.4)

It is easy to see that the average of the fluctuation is zero, i.e.,

<math> \left\langle x^{'} \right\rangle = 0 </math> (2.5)
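As a small numerical illustration of the fluctuation about the mean, the sketch below draws samples from two hypothetical distributions that share the same true mean but have different fluctuation amplitudes. The uniform distributions, the sample size, and the names are assumptions made for this example; the true mean is known here only because the distributions were chosen by hand.

```python
import random

def fluctuations(samples, mean):
    """Return x' = x - X for each sample, given a value for the mean X."""
    return [x - mean for x in samples]

rng = random.Random(0)  # fixed, arbitrary seed so that the run is repeatable
true_mean = 2.0         # X, known only because we picked the distributions ourselves
narrow = [rng.uniform(true_mean - 0.1, true_mean + 0.1) for _ in range(20000)]
wide = [rng.uniform(true_mean - 1.0, true_mean + 1.0) for _ in range(20000)]

for name, samples in (("narrow", narrow), ("wide", wide)):
    primes = fluctuations(samples, true_mean)
    average = sum(primes) / len(primes)
    largest = max(abs(p) for p in primes)
    print(f"{name:6s}: average fluctuation = {average:+.4f}, largest |x'| = {largest:.3f}")
```

For a finite sample the average fluctuation is only close to zero, not exactly zero, while the size of the individual fluctuations clearly separates the two cases.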

On the other hand, the ensemble average of the square of the fluctuation is not zero. In fact, it is such an important statistical measure that we give it a special name, the variance, and represent it symbolically by either <math> var \left[ x \right] </math> or <math> \left\langle \left( x^{'} \right)^{2} \right\rangle </math>. The variance is defined as

<math> var \left[ x \right] \equiv \left\langle \left( x^{'} \right)^{2} \right\rangle = \left\langle \left[ x - X \right]^{2} \right\rangle </math> (2.6)

<math> = \lim_{N \rightarrow \infty} \frac{1}{N} \sum^{N}_{n=1} \left[ x_{n} - X \right]^{2} </math> (2.7)

Note that the variance, like the ensemble average itself, can never really be measured, since it would require an infinite number of members of the ensemble.

It is straightforward to show from equation 2.2 that the variance in equation 2.6 can be written as

<math> var \left[ x \right] = \left\langle x^{2} \right\rangle - X^{2} </math> (2.8)

Thus the variance is the second moment minus the square of the first moment (or mean). In this naming convention, the ensemble mean is the first moment.

The variance can also be referred to as the second central moment of <math> x </math>. The word central implies that the mean has been subtracted off before squaring and averaging. The reasons for this will be clear below. If two random variables are identically distributed, then they must have the same mean and variance.

The variance is closely related to another statistical quantity called the standard deviation or root mean square (rms) value of the random variable <math> x </math>, which is denoted by the symbol <math> \sigma_{x} </math>. Thus,

<math> \sigma_{x} \equiv \left( var \left[ x \right] \right)^{1/2} </math> (2.9)

or

<math> \sigma^{2}_{x} = var \left[ x \right] </math> (2.10)

Higher moments

Figure 2.2 illustrates two random functions of time which have the same mean and also the same variance, but clearly they are still quite different. It is useful, therefore, to define higher moments of the distribution to assist in distinguishing these differences.

The <math> m </math>-th moment of the random variable is defined as

<math> \left\langle x^{m} \right\rangle = \lim_{N \rightarrow \infty} \frac{1}{N} \sum^{N}_{n=1} x^{m}_{n} </math> (2.11)

It is usually more convenient to work with the central moments defined by:

<math> \left\langle \left( x^{'} \right)^{m} \right\rangle = \left\langle \left( x - X \right)^{m} \right\rangle = \lim_{N \rightarrow \infty} \frac{1}{N} \sum^{N}_{n=1} \left[ x_{n} - X \right]^{m} </math> (2.12)

The central moments give direct information on the distribution of the values of the random variable about the mean. It is easy to see that the variance is the second central moment (i.e., <math> m = 2 </math>).
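The moments and central moments can be estimated from a finite set of realizations by replacing the limit with a finite sum. The sketch below does this for two hypothetical random variables that share the same true mean and variance but differ in their third central moment. The two distributions (Gaussian and exponential), the sample size, and the function names are assumptions for illustration; the sample mean is used in place of the unobtainable true mean.

```python
import random

def moment(samples, m):
    """Estimate the m-th moment <x^m> by the arithmetic mean of x_n^m."""
    return sum(x ** m for x in samples) / len(samples)

def central_moment(samples, m):
    """Estimate the m-th central moment <(x - X)^m>, using the sample mean in place of X."""
    mean = moment(samples, 1)
    return sum((x - mean) ** m for x in samples) / len(samples)

rng = random.Random(0)  # fixed, arbitrary seed so that the run is repeatable
n = 50000
# Two hypothetical variables, both with true mean 1 and true variance 1:
gaussian = [rng.gauss(1.0, 1.0) for _ in range(n)]      # symmetric about the mean
exponential = [rng.expovariate(1.0) for _ in range(n)]  # skewed toward large values

for name, samples in (("gaussian", gaussian), ("exponential", exponential)):
    mean = moment(samples, 1)
    var = central_moment(samples, 2)
    var_alt = moment(samples, 2) - mean ** 2  # second moment minus square of the mean
    third = central_moment(samples, 3)
    print(f"{name:12s} mean={mean:.3f} var={var:.3f} var_alt={var_alt:.3f} "
          f"sigma={var ** 0.5:.3f} third central moment={third:.3f}")
```

The two ways of computing the variance should agree to round-off, and the mean and variance estimates should be nearly the same for both variables. Only the third central moment separates them, which is the kind of distinction the higher moments are meant to capture.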