Finite difference

From CFD-Wiki

m (Finite differences moved to Finite difference) |

(Transferred from wikipedia) |


| Line 1: | Line 1: | ||

| - | + | In [[mathematics]], a '''finite difference''' is like a differential quotient, except that it uses finite quantities instead of infinitesimal ones. | |

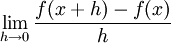

| - | {{ | + | The [[derivative]] of a function ''f'' at a point ''x'' is defined by the [[limit (mathematics)|limit]] |

| + | :<math> \lim_{h\to0} \frac{f(x+h) - f(x)}{h} </math>. | ||

| + | If ''h'' has a fixed (non-zero) value, instead of approaching zero, this quotient is called a ''finite difference''. | ||

| + | |||

| + | ==Calculus of finite differences== | ||

| + | |||

| + | One important aspect of finite differences is that they are analogous to derivatives. This means that [[difference operator]]s, mapping the function ''f'' to a finite difference, can be used to construct a [[calculus of finite differences]], which is similar to the [[differential calculus]] constructed from [[differential operator]]s. | ||

| + | |||

| + | ==Numerical analysis== | ||

| + | |||

| + | Another important aspect is that finite differences approach differential quotients as ''h'' goes to zero. Thus, we can use finite differences to approximate derivatives. This is often used in [[numerical analysis]], especially in [[numerical ordinary differential equations]] and [[numerical partial differential equations]], which aim at the numerical solution of [[ordinary differential equation|ordinary]] and [[partial differential equation]]s respectively. The resulting methods are called ''finite-difference methods''. | ||

| + | |||

| + | For example, consider the ordinary differential equation | ||

| + | :<math> u'(x) = 3u(x) + 2. \, </math> | ||

| + | The [[Numerical ordinary differential equations#The Euler method|Euler method]] for solving this equation uses the finite difference | ||

| + | :<math>\frac{u(x+h) - u(x)}{h} \approx u'(x)</math> | ||

| + | to approximate the differential equation by | ||

| + | :<math> u(x+h) = u(x) + h(3u(x)+2). \, </math> | ||

| + | The last equation is called a '''finite-difference equation'''. Solving this equation gives an approximate solution to the differential equation. | ||

| + | |||

| + | The error between the approximate solution and the true solution is determined by the error that is made by going from a differential operator to a difference operator. This error is called the ''discretization error'' or ''truncation error'' (the term ''truncation error'' reflects the fact that a difference operator can be viewed as a finite part of the infinite [[Taylor series]] of the differential operator). | ||

| + | |||

| + | == Example: the heat equation == | ||

| + | |||

| + | Consider the normalized [[heat equation]] in one dimension, with homogeneous [[Dirichlet boundary condition]]s | ||

| + | |||

| + | :<math> U_t=U_{xx} \, </math> | ||

| + | :<math> U(0,t)=U(1,t)=0 \, </math> (boundary condition) | ||

| + | :<math> U(x,0) =U_0(x) \, </math> (initial condition) | ||

| + | |||

| + | One way to numerically solve this equation is to approximate all the derivatives by finite differences. We partition the domain in space using a mesh <math> x_0, \dots, x_J </math> and in time using a mesh <math> t_0, \dots, t_N </math>. We assume a uniform partition both in space and in time, so the difference between two consecutive space points will be ''h'' and between two consecutive time points will be ''k''. The points | ||

| + | |||

| + | :<math> u(x_j,t_n) = u_j^n </math> | ||

| + | |||

| + | will represent the numerical approximation of <math> U(x_j, t_n). </math> | ||

| + | |||

| + | ===Explicit method=== | ||

| + | |||

| + | Using a [[forward difference]] at time <math> t_n </math> and a second-order central difference for the space derivative at position <math> x_j </math> we get the recurrence equation: | ||

| + | |||

| + | :<math> \frac{u_j^{n+1} - u_j^{n}}{k} =\frac{u_{j+1}^n - 2u_j^n + u_{j-1}^n}{h^2}. \, </math> | ||

| + | |||

| + | This is an [[explicit method]] for solving the one-dimensional [[heat equation]]. | ||

| + | |||

| + | We can obtain <math> u_j^{n+1} </math> from the other values this way: | ||

| + | |||

| + | :<math> u_j^{n+1} = (1-2r)u_j^n + ru_{j-1}^n + ru_{j+1}^n </math> | ||

| + | |||

| + | where <math> r=k/h^2. </math> | ||

| + | |||

| + | So, knowing the values at time ''n'' you can obtain the corresponding ones at time ''n''+1 using this recurrence relation. The values <math> u_0^n </math> and <math> u_J^n </math> must be replaced by the boundary conditions; in this example they are both 0. | ||

| + | |||

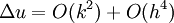

| + | This explicit method is known to be [[numerically stable]] and [[convergent]] whenever <math> r\le 1/2. </math>. The numerical errors are proportional to the time step and the square of the space step: | ||

| + | :<math> \Delta u = O(k)+O(h^2) \, </math> | ||

| + | |||

| + | ===Implicit method=== | ||

| + | If we use the backward difference at time <math> t_{n+1} </math> and a second-order central difference for the space derivative at position <math> x_j </math> we get the recurrence equation: | ||

| + | |||

| + | :<math> \frac{u_j^{n+1} - u_j^{n}}{k} =\frac{u_{j+1}^{n+1} - 2u_j^{n+1} + u_{j-1}^{n+1}}{h^2}. \, </math> | ||

| + | |||

| + | This is an [[implicit method]] for solving the one-dimensional [[heat equation]]. | ||

| + | |||

| + | We can obtain <math> u_j^{n+1} </math> from solving a system of linear equations: | ||

| + | |||

| + | :<math> (1+2r)u_j^{n+1} - ru_{j-1}^{n+1} - ru_{j+1}^{n+1}= u_j^{n} </math> | ||

| + | |||

| + | The scheme is always [[numerically stable]] and [[convergent]] but usually more numerically intensive than the explicit method, as it requires solving a system of linear equations at each time step. The errors are linear in the time step and quadratic in the space step. | ||

| + | |||

| + | ===Crank-Nicolson method=== | ||

| + | Finally if we use the central difference at time <math> t_{n+1/2} </math> and a second-order central difference for the space derivative at position <math> x_j </math> we get the recurrence equation: | ||

| + | |||

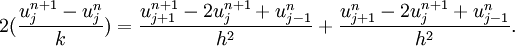

| + | :<math> 2(\frac{u_j^{n+1} - u_j^{n}}{k}) =\frac{u_{j+1}^{n+1} - 2u_j^{n+1} + u_{j-1}^{n}}{h^2}+\frac{u_{j+1}^{n} - 2u_j^{n+1} + u_{j-1}^{n}}{h^2}. \, </math> | ||

| + | |||

| + | This formula is known as the [[Crank-Nicolson method]]. | ||

| + | |||

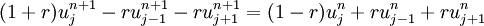

| + | We can obtain <math> u_j^{n+1} </math> from solving a system of linear equations: | ||

| + | |||

| + | :<math> (1+r)u_j^{n+1} - ru_{j-1}^{n+1} - ru_{j+1}^{n+1}= (1-r)u_j^n + ru_{j-1}^n + ru_{j+1}^n </math> | ||

| + | |||

| + | The scheme is always [[numerically stable]] and [[convergent]] but usually more numerically intensive than the explicit method, as it requires solving a system of linear equations at each time step. The errors are quadratic in both the time step and the space step: | ||

| + | :<math> \Delta u = O(k^2)+O(h^2) \, </math> | ||

| + | |||

| + | Usually the Crank-Nicolson scheme is the most accurate scheme for small time steps. The explicit scheme is the least accurate and can be unstable, but is also the easiest to implement and the least numerically intensive. The implicit scheme works the best for large time steps. | ||

| + | |||

| + | ==See also== | ||

| + | * [[Newton series]] | ||

| + | * [[Binomial transform]] | ||

| + | * [[Divided differences]] | ||

| + | * [[Finite element method]] | ||

| + | * [[Multigrid method]] | ||

| + | |||

| + | ==References== | ||

| + | * K.W. Morton and D.F. Mayers, ''Numerical Solution of Partial Differential Equations, An Introduction''. Cambridge University Press, 2005. | ||

| + | * Oliver Rübenkönig, ''[http://www.imtek.uni-freiburg.de/simulation/mathematica/IMSweb/imsTOC/Lectures%20and%20Tips/Simulation%20I/FDM_introDocu.html The Finite Difference Method (FDM) - An introduction]'', (2006) [[Albert Ludwigs University of Freiburg]] | ||

| + | *[http://en.wikipedia.org/wiki/Finite_difference Finite Difference article on Wikipedia] | ||

Revision as of 02:25, 14 February 2006

In mathematics, a finite difference is like a differential quotient, except that it uses finite quantities instead of infinitesimal ones.

The derivative of a function f at a point x is defined by the limit

:<math> \lim_{h\to0} \frac{f(x+h) - f(x)}{h}. </math>

If h has a fixed (non-zero) value, instead of approaching zero, this quotient is called a finite difference.
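The definition above can be tried numerically. The following is a minimal sketch, assuming the test function sin(x) and the evaluation point x = 1 (both chosen only for illustration); the helper name `forward_difference` is hypothetical.

```python
import math

def forward_difference(f, x, h):
    """Finite difference (f(x+h) - f(x)) / h for a fixed, non-zero h."""
    return (f(x + h) - f(x)) / h

# Compare with the exact derivative of sin at x = 1, which is cos(1).
exact = math.cos(1.0)
errors = []
for h in (1e-1, 1e-2, 1e-3):
    approx = forward_difference(math.sin, 1.0, h)
    errors.append(abs(approx - exact))
    print(f"h={h:g}  approx={approx:.6f}  error={errors[-1]:.2e}")
```

The printed error shrinks roughly in proportion to h, which is the behaviour expected of this one-sided quotient.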


Calculus of finite differences

One important aspect of finite differences is that they are analogous to derivatives. This means that difference operators, mapping the function f to a finite difference, can be used to construct a calculus of finite differences, which is similar to the differential calculus constructed from differential operators.

Numerical analysis

Another important aspect is that finite differences approach differential quotients as h goes to zero. Thus, we can use finite differences to approximate derivatives. This is often used in numerical analysis, especially in numerical ordinary differential equations and numerical partial differential equations, which aim at the numerical solution of ordinary and partial differential equations respectively. The resulting methods are called finite-difference methods.

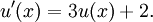

For example, consider the ordinary differential equation

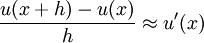

The Euler method for solving this equation uses the finite difference

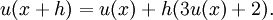

to approximate the differential equation by

The last equation is called a finite-difference equation. Solving this equation gives an approximate solution to the differential equation.
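The finite-difference equation for u'(x) = 3u(x) + 2 can be iterated directly. This is a minimal sketch, assuming the initial value u(0) = 1 and the interval [0, 1], neither of which is given in the text; the function names are hypothetical. The closed-form solution u(x) = (u(0) + 2/3) e^{3x} - 2/3 is used only for comparison.

```python
import math

def euler_solve(u0, h, n_steps):
    """Iterate u(x+h) = u(x) + h*(3*u(x) + 2), starting from u(0) = u0."""
    u = u0
    for _ in range(n_steps):
        u = u + h * (3.0 * u + 2.0)
    return u

def exact_solution(u0, x):
    """Closed-form solution of u' = 3u + 2 with u(0) = u0."""
    return (u0 + 2.0 / 3.0) * math.exp(3.0 * x) - 2.0 / 3.0

u0 = 1.0  # assumed initial value, not taken from the text
errors = []
for n in (10, 100, 1000):
    approx = euler_solve(u0, 1.0 / n, n)  # integrate from x = 0 to x = 1
    errors.append(abs(approx - exact_solution(u0, 1.0)))
    print(f"steps={n}  u(1)~{approx:.4f}  error={errors[-1]:.4f}")
```

Each tenfold reduction of h reduces the error at x = 1 by roughly a factor of ten, consistent with a first-order method.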

The error between the approximate solution and the true solution is determined by the error that is made by going from a differential operator to a difference operator. This error is called the discretization error or truncation error (the term truncation error reflects the fact that a difference operator can be viewed as a finite part of the infinite Taylor series of the differential operator).
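As a worked instance of the truncation error, assume that f is twice continuously differentiable and that h > 0 (assumptions not stated above). Taylor's theorem gives

:<math> f(x+h) = f(x) + h f'(x) + \frac{h^2}{2} f''(\xi), \quad \xi \in (x, x+h), </math>

so that

:<math> \frac{f(x+h) - f(x)}{h} - f'(x) = \frac{h}{2} f''(\xi) = O(h). </math>

Under these assumptions the forward difference is first-order accurate: halving h roughly halves the error.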

Example: the heat equation

Consider the normalized heat equation in one dimension, with homogeneous Dirichlet boundary conditions

(boundary condition)

(boundary condition)

(initial condition)

(initial condition)

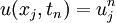

One way to numerically solve this equation is to approximate all the derivatives by finite differences. We partition the domain in space using a mesh  and in time using a mesh

and in time using a mesh  . We assume a uniform partition both in space and in time, so the difference between two consecutive space points will be h and between two consecutive time points will be k. The points

. We assume a uniform partition both in space and in time, so the difference between two consecutive space points will be h and between two consecutive time points will be k. The points

will represent the numerical approximation of

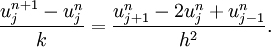

Explicit method

Using a forward difference at time <math> t_n </math> and a second-order central difference for the space derivative at position <math> x_j </math> we get the recurrence equation:

:<math> \frac{u_j^{n+1} - u_j^{n}}{k} =\frac{u_{j+1}^n - 2u_j^n + u_{j-1}^n}{h^2}. \, </math>

This is an explicit method for solving the one-dimensional heat equation.

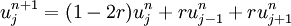

We can obtain  from the other values this way:

from the other values this way:

where

So, knowing the values at time n you can obtain the corresponding ones at time n+1 using this recurrence relation.  and

and  must be replaced by the border conditions, in this example they are both 0.

must be replaced by the border conditions, in this example they are both 0.
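The explicit recurrence u_j^{n+1} = (1-2r)u_j^n + r u_{j-1}^n + r u_{j+1}^n, with r = k/h^2 and zero boundary values, can be sketched as follows. The initial condition U_0(x) = sin(pi x), the mesh size J = 20 and the value r = 0.4 are assumptions made for illustration; with that initial condition the exact solution is exp(-pi^2 t) sin(pi x), which gives something to compare against.

```python
import math

def explicit_step(u, r):
    """One time level: u_j^{n+1} = (1-2r) u_j^n + r u_{j-1}^n + r u_{j+1}^n."""
    new = [0.0] * len(u)  # boundary values u_0 and u_J stay 0
    for j in range(1, len(u) - 1):
        new[j] = (1.0 - 2.0 * r) * u[j] + r * u[j - 1] + r * u[j + 1]
    return new

J = 20            # number of space intervals (assumed)
h = 1.0 / J       # space step
r = 0.4           # r = k / h^2 (assumed value)
k = r * h * h     # time step implied by r and h
u = [math.sin(math.pi * j * h) for j in range(J + 1)]
u[0] = u[J] = 0.0  # impose the Dirichlet boundary values exactly

n_steps = 100
for _ in range(n_steps):
    u = explicit_step(u, r)

t = n_steps * k
exact_mid = math.exp(-math.pi ** 2 * t) * math.sin(math.pi * 0.5)
print(f"t={t:.3f}  numerical={u[J // 2]:.5f}  exact={exact_mid:.5f}")
```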

This explicit method is known to be numerically stable and convergent whenever  . The numerical errors are proportional to the time step and the square of the space step:

. The numerical errors are proportional to the time step and the square of the space step:

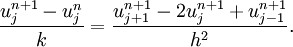
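The stability bound on r = k/h^2 for the explicit method can be observed empirically. This is a small experiment rather than a proof, assuming a single-spike initial condition on a mesh with J = 10 (both chosen for illustration, since a spike excites the high-frequency modes that become unstable); the helper names are hypothetical.

```python
def explicit_step(u, r):
    """One explicit time level with zero Dirichlet boundary values."""
    new = [0.0] * len(u)
    for j in range(1, len(u) - 1):
        new[j] = (1.0 - 2.0 * r) * u[j] + r * u[j - 1] + r * u[j + 1]
    return new

def max_after(r, n_steps, J=10):
    """Largest |u_j| after n_steps, starting from a unit spike at the midpoint."""
    u = [0.0] * (J + 1)
    u[J // 2] = 1.0
    for _ in range(n_steps):
        u = explicit_step(u, r)
    return max(abs(v) for v in u)

stable = max_after(0.4, 100)    # r below 1/2
unstable = max_after(0.6, 100)  # r above 1/2
print(f"r=0.4: max|u|={stable:.3e}")
print(f"r=0.6: max|u|={unstable:.3e}")
```

With r = 0.4 the values stay bounded by the initial maximum, while with r = 0.6 an oscillating component grows by a fixed factor at every step.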

Implicit method

If we use the backward difference at time <math> t_{n+1} </math> and a second-order central difference for the space derivative at position <math> x_j </math> we get the recurrence equation:

:<math> \frac{u_j^{n+1} - u_j^{n}}{k} =\frac{u_{j+1}^{n+1} - 2u_j^{n+1} + u_{j-1}^{n+1}}{h^2}. \, </math>

This is an implicit method for solving the one-dimensional heat equation.

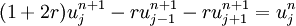

We can obtain  from solving a system of linear equations:

from solving a system of linear equations:

The scheme is always numerically stable and convergent but usually more numerically intensive than the explicit method, as it requires solving a system of linear equations at each time step. The errors are linear in the time step and quadratic in the space step.
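The linear system of the implicit method, (1+2r)u_j^{n+1} - r u_{j-1}^{n+1} - r u_{j+1}^{n+1} = u_j^n, is tridiagonal, so one common way to solve it is the Thomas algorithm. The sketch below is one possible implementation, not the only one; the initial condition sin(pi x), J = 20 and r = 2 (well above the explicit limit) are assumptions for illustration, and the function names are hypothetical.

```python
import math

def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm; lower[0] and upper[-1] do not affect the result."""
    n = len(rhs)
    c = [0.0] * n
    d = [0.0] * n
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):  # forward elimination
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        x[i] = d[i] - c[i] * x[i + 1]
    return x

def implicit_step(u, r):
    """Solve the implicit system for the interior points; boundaries are 0."""
    m = len(u) - 2
    interior = solve_tridiagonal([-r] * m, [1.0 + 2.0 * r] * m, [-r] * m, u[1:-1])
    return [0.0] + interior + [0.0]

J = 20
h = 1.0 / J
r = 2.0            # larger than 1/2 on purpose (assumed value)
k = r * h * h
u = [math.sin(math.pi * j * h) for j in range(J + 1)]
u[0] = u[J] = 0.0

n_steps = 20
for _ in range(n_steps):
    u = implicit_step(u, r)

t = n_steps * k
exact_mid = math.exp(-math.pi ** 2 * t) * math.sin(math.pi * 0.5)
print(f"t={t:.3f}  numerical={u[J // 2]:.5f}  exact={exact_mid:.5f}")
```

The solution stays bounded even though r exceeds 1/2, at the cost of one tridiagonal solve per time step.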

Crank-Nicolson method

Finally, if we use the central difference at time <math> t_{n+1/2} </math> and a second-order central difference for the space derivative at position <math> x_j </math> we get the recurrence equation:

:<math> 2\left(\frac{u_j^{n+1} - u_j^{n}}{k}\right) =\frac{u_{j+1}^{n+1} - 2u_j^{n+1} + u_{j-1}^{n+1}}{h^2}+\frac{u_{j+1}^{n} - 2u_j^{n} + u_{j-1}^{n}}{h^2}. \, </math>

This formula is known as the Crank-Nicolson method.

We can obtain <math> u_j^{n+1} </math> from solving a system of linear equations:

:<math> (2+2r)u_j^{n+1} - ru_{j-1}^{n+1} - ru_{j+1}^{n+1}= (2-2r)u_j^n + ru_{j-1}^n + ru_{j+1}^n </math>

The scheme is always numerically stable and convergent but usually more numerically intensive than the explicit method, as it requires solving a system of linear equations at each time step. The errors are quadratic in both the time step and the space step:

:<math> \Delta u = O(k^2)+O(h^2) \, </math>
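A Crank-Nicolson step can be sketched in the same way. Multiplying the Crank-Nicolson recurrence by k and writing r = k/h^2 gives the tridiagonal system (2+2r)u_j^{n+1} - r u_{j-1}^{n+1} - r u_{j+1}^{n+1} = (2-2r)u_j^n + r u_{j-1}^n + r u_{j+1}^n, which the code below solves with the Thomas algorithm. The initial condition sin(pi x), J = 20 and r = 2 are assumptions for illustration, and the function names are hypothetical.

```python
import math

def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm; lower[0] and upper[-1] do not affect the result."""
    n = len(rhs)
    c = [0.0] * n
    d = [0.0] * n
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):  # forward elimination
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        x[i] = d[i] - c[i] * x[i + 1]
    return x

def crank_nicolson_step(u, r):
    """One Crank-Nicolson time level with zero Dirichlet boundary values."""
    m = len(u) - 2
    # Right-hand side uses the known values at time level n.
    rhs = [(2.0 - 2.0 * r) * u[j] + r * u[j - 1] + r * u[j + 1]
           for j in range(1, m + 1)]
    interior = solve_tridiagonal([-r] * m, [2.0 + 2.0 * r] * m, [-r] * m, rhs)
    return [0.0] + interior + [0.0]

J = 20
h = 1.0 / J
r = 2.0            # assumed value, above the explicit stability limit
k = r * h * h
u = [math.sin(math.pi * j * h) for j in range(J + 1)]
u[0] = u[J] = 0.0

n_steps = 20
for _ in range(n_steps):
    u = crank_nicolson_step(u, r)

t = n_steps * k
exact_mid = math.exp(-math.pi ** 2 * t) * math.sin(math.pi * 0.5)
print(f"t={t:.3f}  numerical={u[J // 2]:.5f}  exact={exact_mid:.5f}")
```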

Usually the Crank-Nicolson scheme is the most accurate scheme for small time steps. The explicit scheme is the least accurate and can be unstable, but is also the easiest to implement and the least numerically intensive. The implicit scheme works the best for large time steps.

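See also

- Newton series

- Binomial transform

- Divided differences

- Finite element method

- Multigrid method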

References

- K.W. Morton and D.F. Mayers, Numerical Solution of Partial Differential Equations, An Introduction. Cambridge University Press, 2005.

- Oliver Rübenkönig, The Finite Difference Method (FDM) - An introduction, (2006) Albert Ludwigs University of Freiburg

- Finite Difference article on Wikipedia